Best Python Code Graders for CS Teachers in 2026

The Best Python Code Graders for Teaching in 2026 (Compared)

CS educators using AI grading tools report saving 60–80% of their grading time, according to 2025 Gallup benchmark data. That sounds like a win — until you realize most Python code graders automate checking, not teaching. They tell students what broke. They rarely explain why.

Python holds the top spot on the TIOBE Index, which tracks language popularity, and it is one of the most widely taught programming languages. Every semester, thousands of instructors assign list comprehensions, recursive functions, and data pipeline projects to growing cohorts. And every semester, grading consumes weekends that should belong to lesson planning, research, or sleep.

The gap isn’t automation — it’s the quality of what gets automated. This comparison unpacks five Python code graders that take meaningfully different approaches to that problem.

What Makes a Python Code Grader Worth Using in 2026?

A Python code grader automatically evaluates student submissions against expected outputs, code quality standards, or test cases — providing scored feedback without manual review.

In 2026, three capabilities separate useful tools from frustrating ones.

Test execution is table stakes. Every grader here runs unittest or pytest suites. The differentiator is what happens after tests run. Code quality analysis, through Flake8, Pylint, or Semgrep, catches structural problems that pass/fail testing misses. And pedagogical feedback quality determines whether students learn from the output or just chase green checkmarks.
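To make the distinction concrete, here is a minimal sketch of a structural check that a passing test would never surface. It uses Python's standard `ast` module as a stand-in for a linter such as Flake8 or Pylint; it is not how either tool is implemented, and `find_bare_excepts` and `submission` are hypothetical names chosen for illustration.

```python
import ast


def find_bare_excepts(source: str) -> list[int]:
    """Return the line numbers of bare `except:` clauses in the source."""
    tree = ast.parse(source)
    return [
        node.lineno
        for node in ast.walk(tree)
        # A bare `except:` has no exception type attached to the handler.
        if isinstance(node, ast.ExceptHandler) and node.type is None
    ]


# Hypothetical student submission: it returns the right answers for
# ordinary inputs, but silently swallows every possible error.
submission = '''
def safe_div(a, b):
    try:
        return a / b
    except:
        return 0
'''

print(find_bare_excepts(submission))
```

An output-only test of `safe_div(4, 2)` would pass here, while the static check flags the handler that hides real errors. That is the kind of issue quality analysis adds on top of test execution.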

A 2025 SIGCSE survey found that customizability and LMS alignment rank as top priorities when selecting grading tools. But a 2023 arXiv survey revealed that while 81% of automated assessment tools fully automate grading, full automation alone doesn’t improve outcomes unless feedback is actionable.

This comparison weighs five dimensions: Python support depth, LMS integration, feedback quality, scalability, and pricing transparency. Tools like CodeGrader are designed around feedback that teaches, not just scores. Others prioritize infrastructure or integration. The right choice depends on your context.

The 5 Best Python Code Graders for Educators in 2026

These five tools span the current spectrum — from open-source frameworks to full LMS-integrated platforms — each with a distinct philosophy about what grading Python means.

Gradescope — Best for Large University Courses

Best for: Professors managing 100+ student cohorts needing plagiarism detection bundled with auto-grading.

Gradescope runs Python autograding through Docker using unittest decorators like @weight and @visibility. Instructors define weighted test cases with hidden edge cases, and partial credit is assigned based on which tests pass. Answer clustering (powered by Turnitin’s AI) groups incorrect solutions so instructors review patterns rather than 120 individual submissions.

Canvas, Moodle, and Turnitin integrations make Gradescope a natural fit for universities already in those ecosystems. The Docker auto-grader supports custom libraries, so NumPy or Pandas assignments don’t require workarounds.

Honest caveat: Pricing is institutional and opaque; independent educators and adjuncts without departmental budgets will find Gradescope effectively inaccessible.

CodeGrade — Best for LMS-Integrated Rubric Grading

Best for: Instructors inside an LMS (Canvas, Moodle) who want a Python code grader they can deploy without switching platforms.

CodeGrade runs Pytest + Semgrep + I/O testing in a single pipeline. A 2025 update added Friendly-Traceback, rendering Python errors in human-readable, multilingual format. Inline comments and peer review workflows set it apart from pure pass/fail systems. Jupyter Notebook support launched August 2025.

Pricing: $39/student/course (Core) or $54 with AI Assistant. That transparency is unusual in ed-tech and simplifies departmental budgeting.

Honest caveat: Public reviews on G2 and Capterra remain limited. Setup complexity for custom test configurations can frustrate instructors without testing experience.

Codio — Best for Hands-On Bootcamp and Workforce Training

Best for: Bootcamps and workforce trainers needing a complete environment — IDE, content, and grading — in one platform.

Codio bundles a cloud IDE with nbgrader-based Jupyter integration, Code Playback for keystroke replay, and unlimited cloud VMs. An instructor assigning a data pipeline project watches students code inside the IDE while nbgrader tests against expected DataFrame outputs. Code Playback pinpoints where student logic diverged.

Instructor accounts are free forever. Student licenses: $10/month (workforce) or $48/semester (higher ed).

Honest caveat: Steep learning curve. Autograding has been flagged for rejecting valid alternate solutions — a serious issue in Python, where list comprehensions and for-loops solve the same problem correctly. Support reviews are consistently poor.

PrairieLearn — Best for Randomized Assessments

Best for: Instructors prioritizing academic integrity through question randomization.

PrairieLearn generates randomized question variants per student — different values, edge cases, and test inputs. Sharing answers becomes impractical when every student gets a unique problem instance. It’s open-source and free, making it the only tool here with no per-student cost.
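The general idea of per-student variants can be sketched as follows. This is an illustrative approach under stated assumptions, not PrairieLearn's actual question API: `make_variant`, the `"hw3-q1"` assignment label, and the `sum_even` task are all hypothetical. Seeding the generator from a hash of the student and assignment identifiers keeps each variant reproducible for regrading.

```python
import hashlib
import random


def make_variant(student_id: str, assignment: str = "hw3-q1") -> dict:
    """Build a reproducible question variant for one student."""
    # Hash the identifiers so the seed is stable across runs and machines.
    digest = hashlib.sha256(f"{assignment}:{student_id}".encode()).hexdigest()
    rng = random.Random(int(digest, 16))

    # Each student gets their own input values and matching answer.
    values = [rng.randint(1, 50) for _ in range(5)]
    return {
        "prompt": f"Write sum_even(values) and report its result for {values}.",
        "values": values,
        "answer": sum(v for v in values if v % 2 == 0),
    }


variant = make_variant("alice")
print(variant["prompt"])
```

Calling `make_variant` twice with the same identifiers returns the same problem, so a regrade sees exactly what the student saw, while different students are seeded independently.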

Honest caveat: Significant setup overhead. Requires server or cloud deployment, and UX is less polished than commercial alternatives. Instructors without devops experience will need support.

CodeGrader — Best for Instant Pedagogical Feedback (Emerging)

Best for: Independent instructors, bootcamp mentors, and students seeking 24/7 pedagogical feedback without an LMS requirement.

CodeGrader was born from a specific frustration: its founder — a teacher — got tired of reviewing 40 repos at 2 AM, knowing each deserved more attention than time allowed. The concept: submit a GitHub or GitLab repo URL (or paste a snippet) and receive a structured AI evaluation covering structure, logic, naming conventions, error handling, and best practices. Each review generates a permanent shareable link students can reference or discuss with mentors.

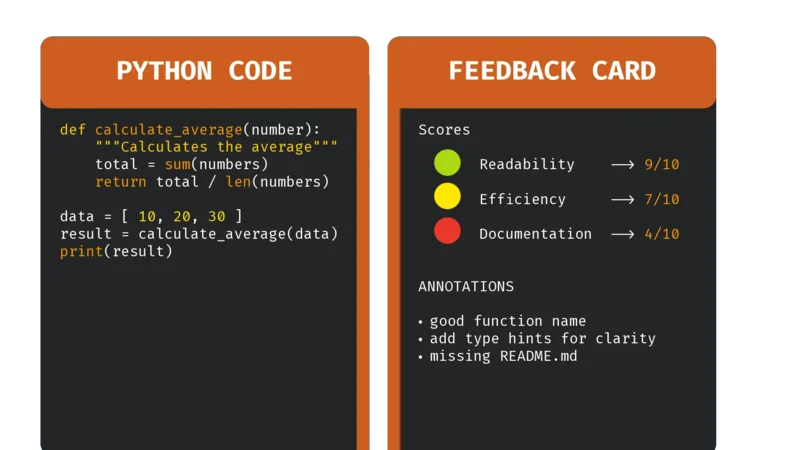

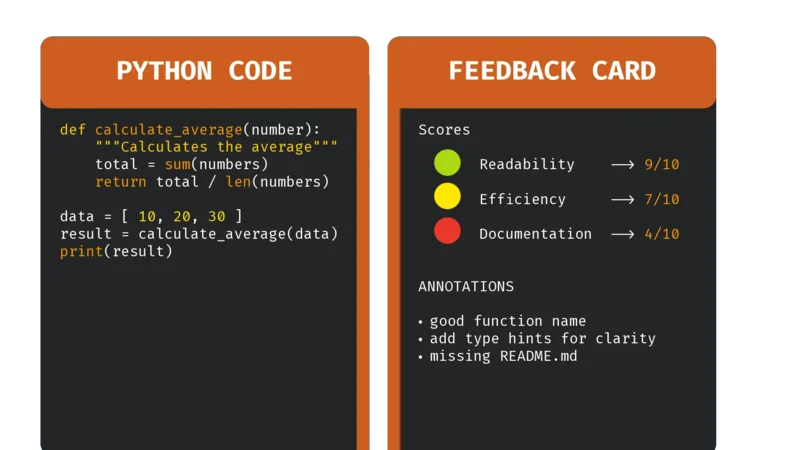

The differentiator is feedback philosophy. Where most tools report pass/fail, CodeGrader is designed to explain why code falls short — the reasoning behind conventions, the logic gap producing wrong output, the error handling a production codebase would require.

Honest framing: CodeGrader is currently exploring this concept and accepting founding members. It doesn’t yet have LMS integrations or a built-in IDE. What it aims to deliver is a faster feedback loop focused on explanation quality over score automation.

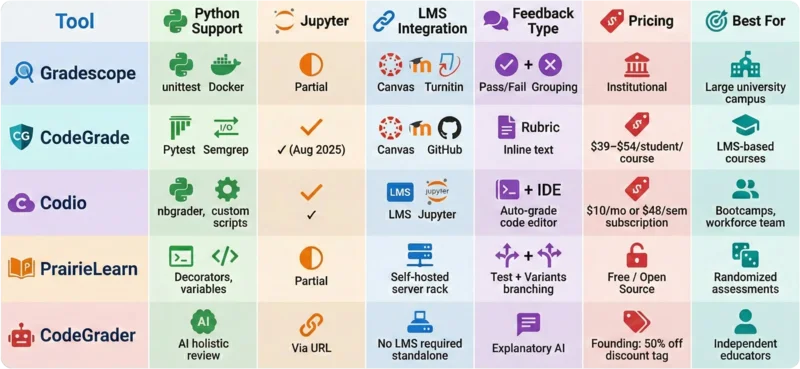

Python Code Grader Comparison: Features at a Glance

Every code grader that Python educators evaluate claims Python support. The differences emerge in how deeply they support it.

Three decision points stand out:

Need LMS integration? CodeGrade or Gradescope slot into Canvas and Moodle workflows.

Need a built-in IDE? Codio bundles coding environment, content authoring, and grading.

Need instant feedback without infrastructure? CodeGrader removes the LMS dependency and focuses on explanation quality.

What Top Python Graders Still Get Wrong

Three systemic gaps persist across today’s tools:

Feedback explains what failed, not why it matters. Pass/fail results don’t build mental models. ACM research found that combining output testing with code pattern analysis improves first-year CS retention, but only when paired with explanatory feedback.

Rigid test matching rejects creative solutions. Python’s flexibility means a problem can be solved multiple ways. When a Python code grader only checks one expected output pattern, correct-but-unconventional submissions get marked wrong.

Feedback arrives after the learning window closes. Students receiving reviews days later have moved on. A 2024 arXiv study found AI graders are 44% more consistent than human re-graders — but consistency means nothing if feedback arrives too late to change behavior.
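The second gap, rigid matching, is often avoidable by grading behavior rather than code shape. The sketch below is a hypothetical illustration, with `squares_comprehension`, `squares_loop`, `CASES`, and `passes_behavior_check` all invented for this example. It checks only what a function returns, so a list comprehension and an explicit for-loop are treated the same.

```python
def squares_comprehension(numbers):
    # Submission A: list comprehension.
    return [n * n for n in numbers]


def squares_loop(numbers):
    # Submission B: explicit for-loop, equally correct.
    result = []
    for n in numbers:
        result.append(n * n)
    return result


# Input/expected pairs, including the empty-list edge case.
CASES = [([], []), ([2], [4]), ([1, -3, 5], [1, 9, 25])]


def passes_behavior_check(func):
    """Grade by return value only, not by how the code is written."""
    # Pass a copy so a submission that mutates its input cannot affect later cases.
    return all(func(list(inputs)) == expected for inputs, expected in CASES)


print(passes_behavior_check(squares_comprehension))
print(passes_behavior_check(squares_loop))
```

Both submissions pass because the check never inspects the source text. Style or structure concerns can then be handled separately by quality analysis instead of being baked into the correctness test.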

The ideal solution combines test execution with clear natural-language explanations delivered within the learning session. That’s the gap CodeGrader is designed to address.

How to Choose the Right Python Code Grader

Your choice comes down to one of three scenarios.

Scenario A — University, 100+ students, LMS required. Gradescope or CodeGrade. Both integrate with Canvas and Moodle. Gradescope bundles plagiarism detection; CodeGrade offers stronger rubric-based inline feedback at $39–$54/student/course.