Articles-First RAG: How to Skip Classification and Cut Chatbot Latency in Half

Why Classification-First RAG Is Slower Than It Needs to Be

Open any RAG tutorial and the pattern looks the same: classify the query, route to the right knowledge base, retrieve, answer. Four stages, clean separation, easy to diagram.

The problem is stage one. Classification is typically a full LLM inference call. Before a single vector is fetched, you have already paid for a round trip to an LLM provider just to decide which bucket the question belongs to. On a content-heavy site, where 80 to 90 percent of questions are about published articles anyway, you are spending that latency to confirm what you already know most of the time.

The workflow I run started out this way. Three knowledge bases (articles, bio, legal), a classifier node up front, three routes downstream. Every question, even the obvious “what does this article say about CV rework”, ate a classification call before retrieval even began.

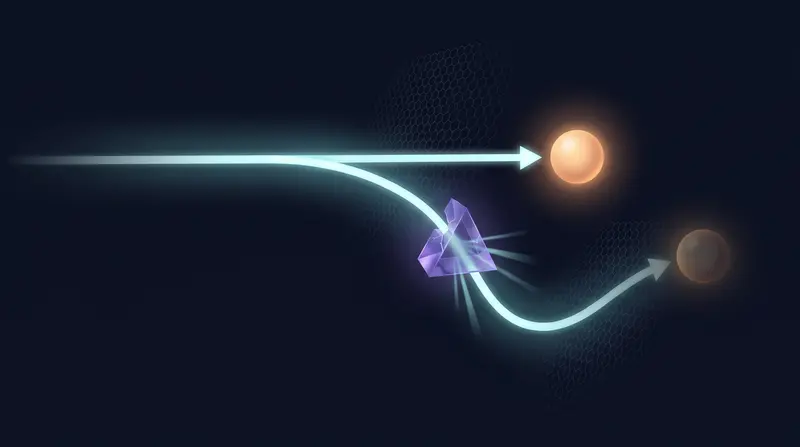

The core idea that reshaped the workflow: if retrieval against the articles KB succeeds with a decent similarity score, you never needed to classify in the first place. Skip the call, go straight to the index, and only fall back to classification when the articles index comes up empty.
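A rough way to reason about the trade-off: let h be the share of queries the articles KB answers, C the classifier call, R one retrieval, and G generation. Classification-first costs C + R + G on every query. Articles-first costs R + G on a hit and 2R + C + G on a miss. Subtracting the two gives an average saving of h·C − (1 − h)·R per query, so the reorder pays off whenever the classifier time skipped on hits outweighs the wasted retrieval on misses. The sketch below only encodes that model; the function names and the placeholder timings are hypothetical, not measurements from this workflow.

```python
# Latency model for the two routing orders. All timings are hypothetical
# inputs in milliseconds; nothing here is measured from the real workflow.


def classify_first_ms(classify: float, retrieve: float, generate: float) -> float:
    # Every query pays for the classifier, then one retrieval and one generation.
    return classify + retrieve + generate


def articles_first_ms(hit_rate: float, classify: float, retrieve: float, generate: float) -> float:
    # Hit: one articles retrieval, then generation. No classifier call.
    hit = retrieve + generate
    # Miss: the empty articles retrieval, the classifier, a domain retrieval, generation.
    miss = retrieve + classify + retrieve + generate
    return hit_rate * hit + (1.0 - hit_rate) * miss


def expected_savings_ms(hit_rate: float, classify: float, retrieve: float) -> float:
    # Closed form of classify_first_ms - articles_first_ms; generation cancels out.
    return hit_rate * classify - (1.0 - hit_rate) * retrieve


if __name__ == "__main__":
    # Placeholder timings, only to show how the model is used.
    c, r, g = 800.0, 150.0, 1500.0
    for h in (0.5, 0.8, 0.9):
        print(h, classify_first_ms(c, r, g), articles_first_ms(h, c, r, g), expected_savings_ms(h, c, r))
```

Note that a miss gets slower by one retrieval under articles-first; the model only says the average improves when hits dominate.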

Here is how the articles-first flow is put together.

Three Knowledge Bases, One Routing Strategy

The production workflow is an advanced-chat pipeline, currently at V2.2, running behind a public site. It handles three kinds of questions: questions about articles I have written, questions about me (bio, background, contact), and questions about legal pages (terms, privacy, cookies). Each lives in its own knowledge base with its own chunking and embedding config. I kept them separate because retrieval quality degrades fast when you mix a 2000-word technical article with a 40-line “About” blurb and a legal disclaimer. Mixed embeddings pull the wrong chunks for the wrong questions, and one-size-fits-all chunk sizes make every branch worse.
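To make the "own chunking config" point concrete, here is a minimal sketch of per-KB chunking settings with a naive word-window splitter. The chunk sizes are hypothetical (the actual values are not given here), and real platforms usually split on tokens and separators rather than raw words. The point is only that a setting tuned for a 2000-word article does not fit a short About page or a legal notice.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class KnowledgeBaseConfig:
    name: str
    chunk_words: int    # window size in words (hypothetical values below)
    overlap_words: int  # words shared between consecutive windows

    def __post_init__(self) -> None:
        # A non-positive step would make the splitter loop forever or fail.
        if not 0 <= self.overlap_words < self.chunk_words:
            raise ValueError("overlap must be >= 0 and smaller than the chunk size")


# Hypothetical settings: long articles get overlapping windows,
# the short bio page is meant to stay in one piece.
KB_CONFIGS = {
    "articles": KnowledgeBaseConfig("articles", chunk_words=300, overlap_words=50),
    "bio": KnowledgeBaseConfig("bio", chunk_words=500, overlap_words=0),
    "legal": KnowledgeBaseConfig("legal", chunk_words=200, overlap_words=20),
}


def split_into_chunks(text: str, cfg: KnowledgeBaseConfig) -> list[str]:
    """Split text into overlapping word windows using one KB's settings."""
    words = text.split()
    if not words:
        return []
    step = cfg.chunk_words - cfg.overlap_words
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + cfg.chunk_words]))
        if start + cfg.chunk_words >= len(words):
            break  # this window already reached the end of the text
    return chunks
```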

The routing strategy is the part most tutorials get backwards. Instead of starting with a question classifier that decides which knowledge base to hit, the workflow hits the articles KB first, unconditionally. Articles are where roughly 80% of real traffic lands, since the article pages themselves are where the chatbot is advertised. If the articles retrieval returns a non-empty result above the score threshold, the workflow goes straight to the answer-generation node with an articles-specific prompt. No classifier call. No router LLM. One retrieval, one generation.

Classification only runs on the miss path. If articles retrieval returns nothing usable, then the workflow invokes a question classifier node to decide between bio, legal, or a polite fallback. That classifier call is the expensive one, and now it only runs for the minority of queries that are not about articles.
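The routing order can be sketched in plain Python, independent of any workflow platform. `retrieve`, `classify`, and `generate` are hypothetical stand-ins for the knowledge-retrieval, question-classifier, and LLM nodes, and `FALLBACK_MESSAGE` is placeholder wording for the polite fallback.

```python
from typing import Callable

# Hypothetical placeholder for the polite fallback answer.
FALLBACK_MESSAGE = "Sorry, I can only help with my articles, my background, or this site's legal pages."

Retrieve = Callable[[str, str], list]       # (kb_name, query) -> chunks above threshold
Classify = Callable[[str], str]             # query -> "bio", "legal", or anything else
Generate = Callable[[str, str, list], str]  # (kb_name, query, chunks) -> answer


def route(query: str, retrieve: Retrieve, classify: Classify, generate: Generate) -> str:
    # Happy path: articles KB first, unconditionally, with no classifier call.
    hits = retrieve("articles", query)
    if hits:
        return generate("articles", query, hits)

    # Miss path: only now pay for the classifier.
    domain = classify(query)
    if domain not in ("bio", "legal"):
        return FALLBACK_MESSAGE
    return generate(domain, query, retrieve(domain, query))
```

Because `classify` is only reached after an empty articles result, a hit never calls it; counting calls in a stub `classify` is an easy way to check that in a test.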

The site itself runs on a Quarkus backend serving a SaaS landing page plus an authenticated dashboard, with MinIO for asset storage, all on a small k3s cluster. The chatbot is embedded on every public article page, which is exactly why articles-first is the right default.

```mermaid
flowchart TD
    A([User Input]) --> B[Articles KB Retrieval]
    B --> C{Results Found?}
    C -- TRUE --> D[Template Node<br/>Format: Title, URL, Snippet]
    D --> E[Article LLM Prompt]
    E --> F([Answer])
    C -- FALSE --> G[Classification LLM<br/>Bio or Legal?]
    G -- Bio --> H[Bio KB Retrieval]
    G -- Legal --> I[Legal KB Retrieval]
    H --> J[Bio LLM Prompt]
    I --> K[Legal LLM Prompt]
    J --> L([Answer])
    K --> M([Answer])

    style A fill:#4A90D9,color:#fff,stroke:none
    style F fill:#27AE60,color:#fff,stroke:none
    style L fill:#27AE60,color:#fff,stroke:none
    style M fill:#27AE60,color:#fff,stroke:none
    style C fill:#E67E22,color:#fff,stroke:none
    style G fill:#8E44AD,color:#fff,stroke:none
    style B fill:#2C3E50,color:#fff,stroke:#4A90D9
    style D fill:#2C3E50,color:#fff,stroke:#4A90D9
    style E fill:#2C3E50,color:#fff,stroke:#4A90D9
    style H fill:#2C3E50,color:#fff,stroke:#8E44AD
    style I fill:#2C3E50,color:#fff,stroke:#8E44AD
    style J fill:#2C3E50,color:#fff,stroke:#8E44AD
    style K fill:#2C3E50,color:#fff,stroke:#8E44AD
```

Building the Routing Logic: IF/ELSE on Retrieval Results

The happy path is short: Start → Knowledge Retrieval (articles KB) → IF/ELSE → Template → LLM. No classifier at the top; the user query goes straight into the articles knowledge base.

The IF/ELSE node checks a single condition:

```text
{{#knowledge_retrieval.result#}} is not empty
```

That is it. The platform returns an empty array when no chunk clears the score threshold (I run it at 0.5 with hybrid search, weight 0.7 semantic / 0.3 keyword). Non-empty means the articles KB had something relevant. Empty means fall back.
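Here is a sketch of what that threshold means, assuming each chunk comes back with a semantic score and a keyword score already normalized to [0, 1], and that the two are combined linearly with the 0.7/0.3 weights. The linear combination and the inclusive `>=` cut-off are assumptions about the platform's hybrid mode, not its documented internals; the function names are hypothetical.

```python
SEMANTIC_WEIGHT = 0.7
KEYWORD_WEIGHT = 0.3
SCORE_THRESHOLD = 0.5


def hybrid_score(semantic: float, keyword: float) -> float:
    # Assumes both inputs are already normalized to [0, 1].
    return SEMANTIC_WEIGHT * semantic + KEYWORD_WEIGHT * keyword


def filter_chunks(candidates: list[dict]) -> list[dict]:
    """Keep chunks whose weighted score clears the threshold, best first."""
    kept = []
    for c in candidates:
        score = hybrid_score(c["semantic"], c["keyword"])
        if score >= SCORE_THRESHOLD:  # inclusive cut-off is an assumption
            kept.append({**c, "score": score})
    return sorted(kept, key=lambda c: c["score"], reverse=True)


def takes_true_branch(candidates: list[dict]) -> bool:
    # Mirrors the IF/ELSE condition: a non-empty filtered result means TRUE.
    return len(filter_chunks(candidates)) > 0
```

With these weights, a chunk scoring 0.6 semantic and 0.2 keyword lands at 0.48 and is dropped, while 0.8 semantic and 0.1 keyword lands at 0.59 and survives.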

TRUE branch, format and answer

On the TRUE branch, a template node shapes the chunks into a structured context block before the LLM call:

```jinja
{% for chunk in knowledge_retrieval.result %}
---
Title: {{ chunk.metadata.title }}
URL: {{ chunk.metadata.source_url }}
Excerpt: {{ chunk.content }}
{% endfor %}
```
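For testing the prompt outside the workflow, the same structure can be reproduced in plain Python. `format_context` is a hypothetical helper that mirrors the template's layout rather than its exact whitespace. It assumes chunks are dicts with `content` and `metadata` keys, as the template implies; a missing `title` or `source_url` renders as an empty string, as it would in Jinja by default.

```python
def format_context(chunks: list[dict]) -> str:
    """Plain-Python mirror of the template node: one block per chunk."""
    blocks = []
    for chunk in chunks:
        meta = chunk.get("metadata", {})
        blocks.append(
            "---\n"
            f"Title: {meta.get('title', '')}\n"
            f"URL: {meta.get('source_url', '')}\n"
            f"Excerpt: {chunk['content']}"
        )
    return "\n".join(blocks)
```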

The LLM prompt on this branch is article-specific. It tells the model: “You are answering from published articles. Always cite the article title and include the source URL in your answer. If the excerpts do not fully answer the question, say so, do not improvise.” That citation instruction only makes sense when the source is articles, which is why this prompt lives here and not upstream.
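A minimal sketch of how that article-only instruction might be paired with the formatted context at call time. The role/content message shape is the common chat-completions convention and is an assumption here, since the workflow platform assembles the messages itself; `build_article_messages` is a hypothetical name.

```python
# The quoted article-branch instruction, verbatim.
ARTICLE_SYSTEM_PROMPT = (
    "You are answering from published articles. Always cite the article title "
    "and include the source URL in your answer. If the excerpts do not fully "
    "answer the question, say so, do not improvise."
)


def build_article_messages(question: str, context: str) -> list[dict]:
    # System message carries the citation rules; the user message carries the
    # formatted excerpts followed by the visitor's question.
    return [
        {"role": "system", "content": ARTICLE_SYSTEM_PROMPT},
        {"role": "user", "content": f"Excerpts:\n{context}\n\nQuestion: {question}"},
    ]
```

Keeping the citation rules in the system message of this branch only is what stops them leaking into the bio and legal answers.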

FALSE branch, classify, then retrieve again

The FALSE branch is where the classifier finally shows up, and only here. A small LLM node tags the query as bio or legal, then a second knowledge retrieval node hits the matching domain KB. A different LLM prompt handles the answer: no URL citations (domain KBs are internal reference material, not public articles), more conservative tone, explicit “consult a professional” disclaimer on the legal branch.
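The per-branch differences can be captured as data. In this sketch the prompt wording is hypothetical; only the differences themselves (URL citations on articles only, a "consult a professional" disclaimer on legal) come from the workflow described above. One deliberate swap: the sketch appends the disclaimer in code rather than relying on the model to include it, which is a design choice, not how the workflow is described.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BranchPrompt:
    system: str       # hypothetical wording
    cite_urls: bool   # only the articles branch cites public URLs
    disclaimer: str = ""


BRANCH_PROMPTS = {
    "articles": BranchPrompt("Answer from published articles; cite title and URL.", cite_urls=True),
    "bio": BranchPrompt("Answer conservatively from the bio reference material.", cite_urls=False),
    "legal": BranchPrompt(
        "Answer conservatively from the site's legal pages.",
        cite_urls=False,
        disclaimer="This is general information, not legal advice; consult a professional.",
    ),
}


def finalize(branch: str, answer: str) -> str:
    """Append the branch's disclaimer, if it has one, to a generated answer."""
    disclaimer = BRANCH_PROMPTS[branch].disclaimer
    return f"{answer}\n\n{disclaimer}" if disclaimer else answer
```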